What Is The Future Of Robotic Grasping & Manipulation?

For robots to be useful, they need to be able to grasp and manipulate things in the real world; while great strides have been made, there is still much to do.

Aaron’s Thoughts On The Week

"A simple handshake would give them away." - Dr. Robert Ford in the TV Show Westworld

In the 2016 science-fiction series "Westworld," Anthony Hopkins plays Dr. Robert Ford, who points out a unique imperfection of the park's early robotic hosts: their hands. The hosts were made to look like humans, but their hands were not as sophisticated. This detail highlights an age-old problem in robotics: replicating human hand movements and manipulation is a complex challenge.

This theme is also seen in another iconic science-fiction narrative, the Terminator films, where the creation of the Skynet artificial intelligence started with the reverse engineering of a futuristic hand. Here, the movies may be on to something besides scaring us to death about killer robots: mastering robot hand design is a crucial step towards achieving advanced autonomy in robots.

The third industrial revolution introduced robots to production lines, revolutionizing manufacturing. Today, we are in the midst of another revolution driven by artificial intelligence (AI), which enhances quality of life through virtual assistants and has even played a role in managing global crises, such as the search for a COVID-19 vaccine. However, transitioning robots from assembly lines to more dynamic environments requires mastering new skills that involve sophisticated interaction with physical environments, especially those designed for humans.

Over the past decades, robotic manipulation has made significant progress thanks to academic, industrial, and governmental interests. Major strides have been made in improving how robots perceive, plan, and execute tasks that involve manipulating objects. This includes everything from simple pick-and-place operations to complex assembly tasks. Despite these advancements, challenges persist, particularly in executing these tasks in varied, real-world settings.

One significant issue lies in the mechanical and control aspects of robotic hands. Simple grippers and vacuum cups reduce complexity, but they often lack the dexterity required for nuanced tasks. More human-like hands that feature movable thumbs and articulated fingers introduce a new level of operational complexity and require sophisticated sensing technologies that are still underdeveloped.

On the algorithmic front, manipulation tasks involve a series of interconnected processes that are not easily adaptable across different robotic platforms. Small environmental changes can drastically affect performance, highlighting how poorly current models generalize across various settings.

Generative models, such as those used in deep learning, are starting to offer some hope by learning from vast amounts of data to predict and adapt to new scenarios. However, these, too, require extensive data that is difficult and expensive to collect.

Moreover, perception challenges, such as dealing with occlusions or handling materials that are difficult to grasp due to texture or reflectivity, complicate interaction. Robots need to learn to manage these uncertainties effectively, adapting their strategies in real time based on how reliable the sensory data is. This involves integrating uncertain perception into planning algorithms to adjust actions dynamically, a capability still in its infancy.
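To make the idea of folding uncertain perception into planning a little more concrete, here is a minimal sketch in Python. It is not taken from any particular robot stack. The class `GraspCandidate`, the functions `expected_success` and `choose_action`, and all of the numbers are hypothetical, chosen only for illustration. The sketch weights each candidate grasp by how much the robot trusts what it sees, and falls back to taking another look when nothing is trustworthy enough.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GraspCandidate:
    """Hypothetical container for one grasp option (illustration only)."""
    name: str
    success_if_seen_correctly: float  # estimated success rate when perception is right
    perception_confidence: float      # probability the object estimate is right


def expected_success(c: GraspCandidate, success_if_wrong: float = 0.05) -> float:
    """Blend the two outcomes by how much the perception estimate is trusted."""
    p = c.perception_confidence
    return p * c.success_if_seen_correctly + (1.0 - p) * success_if_wrong


def choose_action(candidates: List[GraspCandidate], min_expected: float = 0.6) -> str:
    """Return the grasp to attempt, or 'look_again' if no option is good enough."""
    if not candidates:
        return "look_again"
    best = max(candidates, key=expected_success)
    if expected_success(best) < min_expected:
        # Too uncertain: gather more sensor data before committing to a grasp.
        return "look_again"
    return best.name


if __name__ == "__main__":
    clear_view = [
        GraspCandidate("top_pinch", 0.9, 0.5),   # strong grasp, shaky perception
        GraspCandidate("side_wrap", 0.8, 0.9),   # weaker grasp, reliable perception
    ]
    occluded = [
        GraspCandidate("top_pinch", 0.9, 0.4),
        GraspCandidate("side_wrap", 0.8, 0.4),
    ]
    print(choose_action(clear_view))
    print(choose_action(occluded))
```

In this toy setup, the grasp that looks best on paper loses to the one backed by more reliable perception, and a heavily occluded scene triggers another look instead of a blind attempt. Real systems would estimate these probabilities from sensor models and data, which is exactly the hard part.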

Recent developments in AI and robotics have also brought attention to the potential of physical human-robot interaction (pHRI), where humans and robots collaborate on tasks. This includes remote operation in hazardous environments and wearable robotics like prostheses and exoskeletons to enhance human capabilities. However, the interfaces for these technologies often require extensive training and are limited by the current state of sensory feedback and control algorithms.

Bottom Line: This Is Hard Stuff

Don’t get down on what appears to be the current state of this field. There is a lot of work taking place around robotic grasping and manipulation, and we are all moving the needle in many areas. The key takeaway is that while we are seeing some great things and some fantastic videos, all of this still requires very hard work.

We humans grasp and manipulate things without a second thought. We think that if it is easy for us, then it should be easy for robots. Not to get too much into Moravec's Paradox, but we must stop thinking this way. Whether it is a lack of training data or mechanical or electrical issues, we need to be open and honest about what is holding robots back. We solve another piece of the puzzle every day, but we need more collaboration and coordination to accelerate these efforts.

If we want to see robots in as many applications as possible, the ability to grasp and manipulate various items will be essential.

Getting Things Done

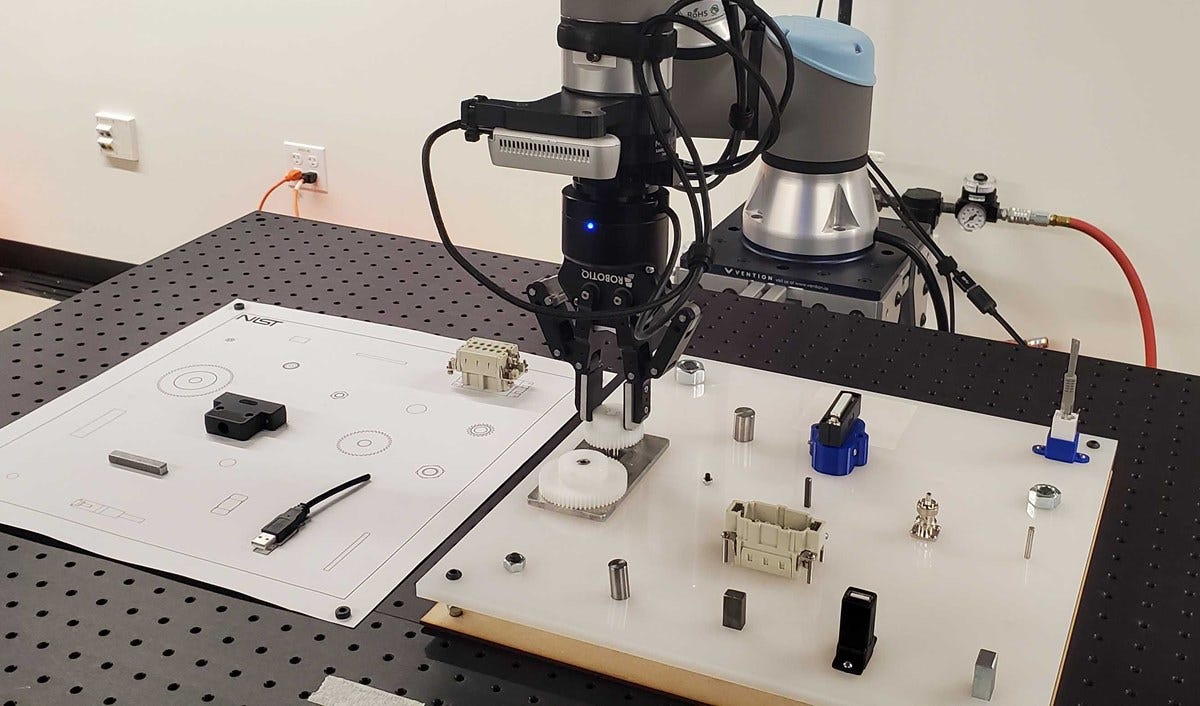

One area that will help grasping and manipulation is the introduction of new standards. ASTM International’s F45.05 Grasping & Manipulation subcommittee was formed in 2023 and will start publishing its first batch of test and performance standards this year.

These standards are based on years (if not decades) of research at NIST in the US. Currently, the subcommittee has broken its focus into three areas:

Baseline Grasping and Manipulation - finger strength, repeatability, etc.

Mobile Manipulators - manipulators that are part of a mobile system

Assembly and Disassembly - leveraging the NIST assembly boards to test assembly tasks.

Many researchers have leveraged the work at NIST to advance their own grasping and manipulation work. This is why it is being formalized into international standards: to help other researchers leverage the work and to start creating true apples-to-apples comparisons between research projects, based on shared baseline standards for testing performance.
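As a rough illustration of why shared baselines matter, here is a small Python sketch that compares two grippers tested under the same protocol. It is not drawn from the ASTM or NIST test methods. The function names and the trial counts are made up for the example, and the Wilson score interval is simply one common way to put error bars on a success rate.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a success rate (z = 1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


def intervals_overlap(a: Tuple[float, float], b: Tuple[float, float]) -> bool:
    """True when two intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


if __name__ == "__main__":
    # Hypothetical results: same objects, same number of attempts per gripper.
    gripper_a = wilson_interval(45, 50)
    gripper_b = wilson_interval(40, 50)
    print("A:", gripper_a)
    print("B:", gripper_b)
    print("overlap:", intervals_overlap(gripper_a, gripper_b))
```

With these made-up numbers, a 90% success rate and an 80% success rate still have overlapping intervals after 50 attempts each, so the comparison only means something when both projects ran the same test and reported how many trials they ran.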

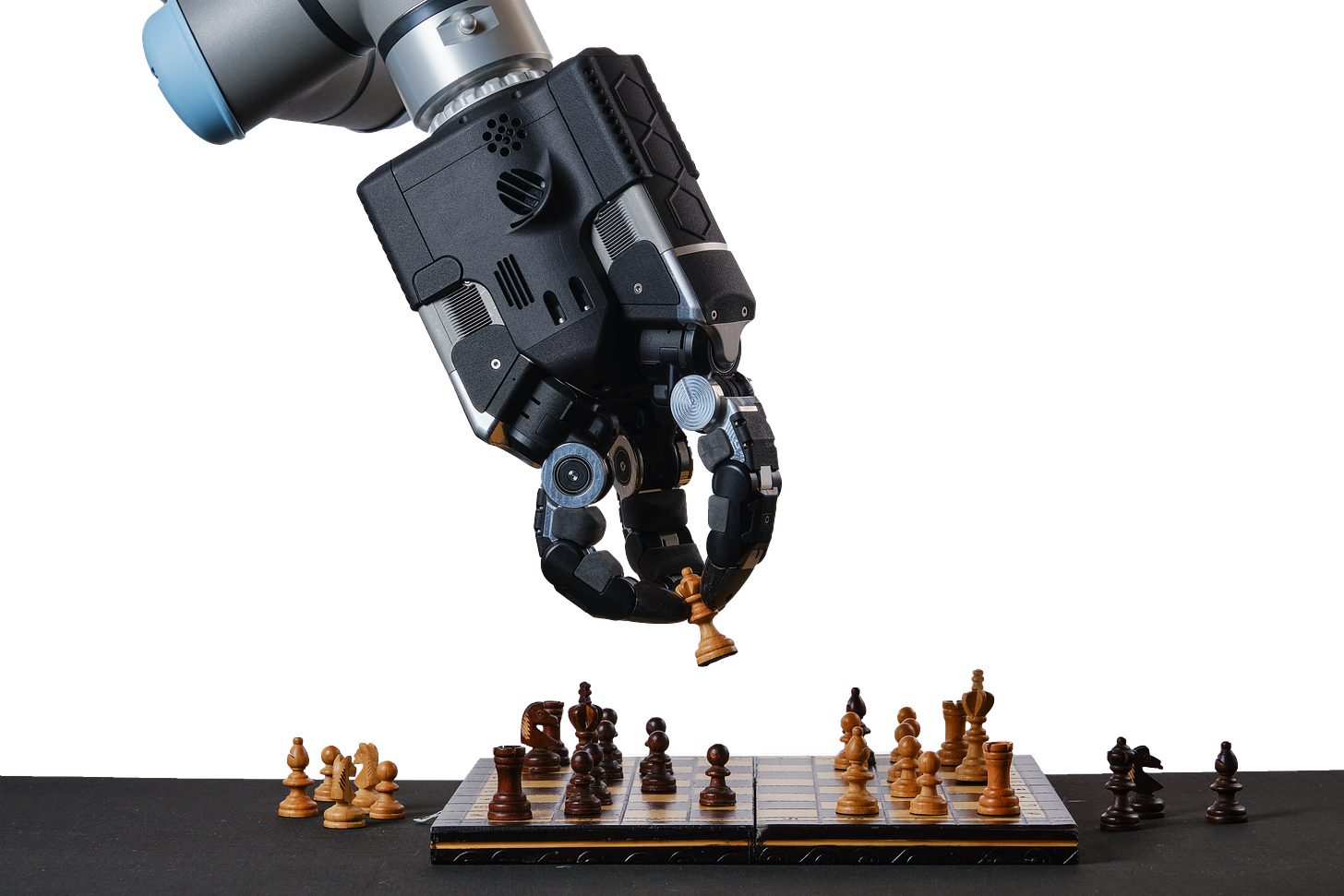

Then there are companies like Shadow Robot, which is doing some amazing work in creating new graspers and manipulators that address both the hardware and software challenges. We made their new robot hand our video of the week, so make sure to check it out.

Shadow Robot collaborated with Google DeepMind to develop a new Shadow Hand, a robotic hand with fast, flexible and precise finger movements, equipped with advanced tactile sensors to understand its environment through the sense of touch. The hand has precise torque control, achieved with internal 10 kHz force control loops, making it reliable for long-running experiments.

This is the type of collaboration we need to see. Google DeepMind’s work in grasping and manipulation is well documented, and they saw that they needed much more advanced hardware to start creating real-world solutions. So, teaming up with Shadow Robot on this new hand could, and I will say will, get them past the roadblocks they are facing.

Putting a Bow on It

Looking forward, roboticists aim to overcome the limitations of manipulation and interaction, pushing the boundaries of what's possible. The next decade promises advancements that will likely see more intuitive interfaces, improved sensory feedback systems, and more adaptive control strategies that could transform how robots interact with their environments and humans. As these technologies evolve, they will not only expand the capabilities of robots but also enhance their practical applications in everyday life and complex, dynamic environments.

Collaboration among researchers, and then with industry partners, is going to be the pathway that creates more advances. Organizations like NIST and ASTM will continue to support efforts to create benchmarks that allow everyone from researchers to end users to compare options and find the best solution for their grasping or manipulation application. It will really take a global effort to teach robots to move items like we move items.

We are definitely advancing, but we still have not duplicated the greatest grasper/manipulator that changed the world we know - our own human hand.

Robot News Of The Week

NVIDIA invests in AI-powered weed zapping ag-tech startup Carbon Robotics

Seattle-based startup Carbon Robotics has raised an undisclosed amount from NVIDIA's venture capital arm, NVentures, for its AI-powered farming solutions. The company has so far raised $85 million in funding and has developed a "LaserWeeder" that can detect and eliminate weeds with lasers by relying on artificial intelligence. The device uses 24 NVIDIA graphics processing units and can get rid of 5,000 weeds per minute, reducing weed control costs by 80% while increasing crop yield and quality. Other Pacific Northwest startups in the ag-tech field include Aigen, FarmHQ, Pollen Systems, TerraClear, and more.

Rifle-Armed Robot Dogs Now Being Tested By Marine Special Operators

The US Marines are testing robotic "dogs" developed by Ghost Robotics for applications such as reconnaissance and surveillance. Onyx Industries is providing gun systems for remote engagement. Marine Forces Special Operations Command (MARSOC) clarifies that weaponized payloads are just one of many use cases being evaluated and says it adheres to all Department of Defense policies concerning autonomous weapons.

Two-armed InductOne from Plus One Robotics designed for parcel induction

Plus One Robotics has launched InductOne, a two-armed robot designed to optimize parcel singulation and induction in high-volume fulfillment and distribution centers. InductOne is equipped with an individual cup control gripper that can handle a wide range of parcel sizes and shapes. It avoids picking non-conveyable items, allowing them to automatically convey to a designated exception path and preventing the robots from wasting precious cycles handling items that should not be inducted. The dual-arm design of InductOne significantly outperforms single-arm solutions, and it can handle parcels weighing up to 15 lb. and up to 27 in. in length.

Robot Research In The News

Robotic system feeds people with severe mobility limitations

Cornell researchers have developed a robotic feeding system that uses computer vision, machine learning, and multimodal sensing to feed people with severe mobility limitations. The system's robot is outfitted with real-time mouth tracking and a dynamic response mechanism that enables it to detect the nature of physical interactions as they occur and react appropriately. In a user study spanning three locations, the robotic system successfully fed 13 individuals with diverse medical conditions. The potential to improve care recipients' level of independence and quality of life is promising.

Microrobotic Swarms Tackle Microplastics and Bacteria Pollution

In a recent study published in the journal ACS Nano, researchers reported how swarms of microscale robots, or microrobots, collected microorganisms and plastic fragments from water. The bots were then cleaned and put to use again.

Robot Workforce Story Of The Week

'AI can help people keep physical jobs for longer'

A company in the UK is using AI to extend the working life of people with physical jobs. Technicians at German Autowerks were filmed while working, and AI analyzed the video to identify pressure points and potential problem areas on the body. The company then used the information to select specific exoskeletons for staff to wear. These exoskeletons are powered harnesses that take some of the strain of the job away from the body, helping to prevent musculoskeletal problems. The AI technology was provided by Hertfordshire-based Stanley Handling, which believed systems such as these would become standard Personal Protective Equipment (PPE) in the future.

Robot Video Of The Week

We are going to give Shadow Robot the “Video of the Week” recognition for its new robot hand. The UK-based company has developed a durable robotic hand that performs fast finger movements and can withstand intense damage. Google DeepMind is already using it in robotics experiments to train artificial intelligence. The hand can close within 500 milliseconds and apply up to 10 newtons of force in a fingertip pinch.

Upcoming Robot Events

May 13-17 IEEE-ICRA (Yokohama, Japan)

June 4-5 Smart Manufacturing Experience (Pittsburgh, PA)

June 24-27 International Conference on Space Robotics (Luxembourg)

July 2-4 International Workshop on Robot Motion and Control (Poznan, Poland)

July 8-12 American Control Conference (Toronto, Canada)

Aug. 6-9 International Woodworking Fair (Chicago, IL)

Sept. 9-14 IMTS (Chicago, IL)

Oct. 1-3 International Robot Safety Conference (Cincinnati, OH)

Oct. 8-10 Autonomous Mobile Robots & Logistics Conference (Memphis, TN)

Oct. 15-17 Fabtech (Orlando, FL)

Oct. 16-17 RoboBusiness (Santa Clara, CA)

Oct. 28-Nov. 1 ASTM Intl. Conference on Advanced Manufacturing (Atlanta, GA)

Nov. 22-24 Humanoids 2024 (Nancy, France)